Introduction to Word Embedding Models

By Juniper Johnson, with Julia Flanders and Sarah Connell

Word embedding models are a set of techniques from machine learning and natural language processing (NLP) that model textual data numerically, using mathematical structures such as vectors to map semantic relationships between words in a corpus. Many forms of textual analysis, such as word frequencies, concordances, and topic modeling, can be used to explore meaning across a corpus. Word embedding models, however, are able to process large amounts of textual data and predict semantic and conceptual relationships. Because they use machine learning to discover how words behave in relation to one another, word embedding models can be used to analyze the ways in which words appear in similar contexts in a corpus, and to examine what those associations might mean. For example, take the names of months like “January” and “February.” Models trained on most corpora will likely find that the closest words to these terms (that is, the words likeliest to appear in similar contexts) are other months. Besides looking for similarities between words in a corpus, word embedding models can be used to explore differences, analogies, and even conceptual spaces: by defining a cluster of key words around a topic (like words related to power or gender), scholars can use word embedding models to analyze concepts across large corpora. The ability to explore large textual data sets with such density of information is one of the exciting possibilities that word embedding models offer scholars.

This introduction covers core concepts, a brief background on the development of word embeddings, and the specific implementation of word embedding models for the Women Writers Vector Toolkit (WWVT). It is not exhaustive, however. For more information about any of the topics covered, please visit the annotated reading list on the WWVT website, which also offers a glossary of key terminology and an in-depth write-up of the WWVT’s methodologies. Finally, there are further recommended readings on related topics like natural language processing, machine learning, and computational textual analysis at the end of this introduction.

Background

Before delving into the specifics of word embedding models, it is important to sketch their origins in both machine learning and natural language processing. Natural language processing is a domain of computer science that analyzes and processes human language (as contrasted with “machine” languages, such as software code). NLP covers a wide range of methods and topics, including parsing language for structure and syntax, identifying meaning and affect (as in sentiment analysis), speech recognition, machine translation between languages, and, more recently, the application of deep learning to natural language. Machine learning is a branch of artificial intelligence concerned with systems whose outputs improve, or are “learned,” through exposure to data. In machine learning, algorithms often analyze input data for relationships and patterns in order to predict outputs. To facilitate this process, machine learning uses different mathematical models of information, including models called “neural networks” that are loosely inspired by cognitive science and biology.

Word embedding models bring together natural language processing and machine learning to create spatial models that represent textual data mathematically in order to predict semantic relationships. Two of the most widely used algorithms for word embedding models are GloVe and Word2Vec. GloVe is a machine-learning algorithm developed by the Natural Language Processing Group at Stanford University that produces word vectors by fitting a weighted model to global statistics on how often words co-occur in a corpus. Word2Vec was originally developed by Tomas Mikolov and other researchers at Google in 2013. It uses a shallow neural network to learn word associations in a corpus, training the network to predict words from their surrounding contexts (or contexts from words), so that words used in similar contexts end up with similar vectors.

The Women Writers Vector Toolkit web interface uses a form of Word2Vec implemented in the R package wordVectors, developed by Ben Schmidt and Jian Li. Packages in other programming languages, such as Gensim for Python by Radim Řehůřek, likewise give users control over the model training process, letting them set specific training parameters and query a trained model. The WWP has created a series of introductions and templates for training and querying word embedding models with the wordVectors R package, which are available for download. Alternatively, the WWVT also includes a web interface so that researchers can query and interact with trained models without downloading any software.

The model training process

The following section provides an overview of the training process for word embedding models, outlines key concepts, and demonstrates possible applications. Word embedding models represent a text corpus as a complex, multidimensional set of word relationships that we can envision in a quasi-spatial manner: as a high-dimensional “cloud” in which the proximity of words within the model expresses similarity of usage. Word embedding models treat an entire corpus as a single unit of analysis: instead of a series of documents, sections, or sentences, the model represents a corpus as a single sequence of words, disregarding grammar, syntax, punctuation, and other textual structures.

After the corpus is cleaned (which typically involves removing punctuation and converting all letters to lowercase), it is combined into one file and then passed to the training algorithm (here, Word2Vec as implemented in wordVectors). At the start of the training process, each word is assigned a random vector (a position in the multidimensional space of the model), which is then refined during training based on observations of actual word relationships. The combined text is passed through an observational “window” that moves along the corpus, analyzing the probability of each word pairing within that window. One complete pass through the entire corpus constitutes one “iteration.” The full training process involves multiple iterations, each one adjusting the position of every word in vector space based on additional observations of word relationships. Sequence and proximity matter in this process because, as a model is trained, it draws on information about word contexts to build a multidimensional model of the entire corpus that expresses the relationship of each word to all (or most) of the others.
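The cleaning and windowing steps described above can be sketched in a few lines of Python. This is a minimal illustration, not the wordVectors preprocessing: it assumes that “cleaning” means lowercasing and replacing every non-word, non-space character with a space, and the function names `clean_corpus` and `window_pairs` and the `window` value are hypothetical. Because all documents are joined into one token sequence, windows can cross document boundaries, just as they would in a single combined file.

```python
import re


def clean_corpus(documents):
    """Lowercase each document, drop punctuation, and join everything into one token sequence."""
    tokens = []
    for doc in documents:
        # Assumption: any character that is not a word character or whitespace is punctuation.
        text = re.sub(r"[^\w\s]", " ", doc.lower())
        tokens.extend(text.split())
    return tokens


def window_pairs(tokens, window=2):
    """Yield (center word, context word) pairs for every word within `window` positions."""
    for i, center in enumerate(tokens):
        start, end = max(0, i - window), min(len(tokens), i + window + 1)
        for j in range(start, end):
            if j != i:
                yield center, tokens[j]


docs = ["The Queen rules.", "The King, too, rules!"]
tokens = clean_corpus(docs)
print(tokens)
# ['the', 'queen', 'rules', 'the', 'king', 'too', 'rules']
print(list(window_pairs(tokens, window=1))[:4])
# [('the', 'queen'), ('queen', 'the'), ('queen', 'rules'), ('rules', 'queen')]
```

A wider window gathers more distant neighbors for each word, which is one reason window size is a common training parameter.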

A word vector, then, represents the behavior of a single word in the corpus as a set of dimensions. Each dimension is a piece of information about the relationship between that word and others in the corpus, which helps to specify the location of that word in vector space. In theory, a model could have as many dimensions as there are unique words in the corpus; that is, each word’s position within the model would be expressed in relation to every other word. In practice, however, such a model contains a great deal of insignificant information. Instead, the “embedding” process collapses these thousands of information-poor dimensions into a smaller number (typically a few hundred) of more informationally useful dimensions. When training a model, you can set the number of dimensions as one of the parameters. A higher number of dimensions allows the model to capture more nuanced relationships between words, but also requires more training time.
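To make the “one dimension per unique word” idea concrete, the sketch below builds that uncompressed representation directly: each word’s vector counts how often every vocabulary word appears within its window, so the vector has exactly as many dimensions as the vocabulary has words. This count-based approach is an illustration only, not how Word2Vec stores its vectors; the embedding process replaces these sparse counts with a few hundred learned dimensions. Cosine similarity, a common measure for comparing word vectors, shows that words used in similar contexts (here, two months) point in the same direction. The function names and toy sentence are hypothetical.

```python
import math


def cooccurrence_vectors(tokens, window=2):
    """Return the sorted vocabulary and {word: [count of each vocabulary word in its window]}."""
    vocab = sorted(set(tokens))
    index = {w: k for k, w in enumerate(vocab)}
    vectors = {w: [0] * len(vocab) for w in vocab}
    for i, center in enumerate(tokens):
        for j in range(max(0, i - window), min(len(tokens), i + window + 1)):
            if j != i:
                vectors[center][index[tokens[j]]] += 1
    return vocab, vectors


def cosine(a, b):
    """Cosine of the angle between two vectors: 1.0 means they point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


tokens = "in january it snowed in february it snowed we ate bread we ate soup".split()
vocab, vecs = cooccurrence_vectors(tokens, window=1)
print(len(vocab), len(vecs["january"]))  # 9 9: one dimension per unique word
print(round(cosine(vecs["january"], vecs["february"]), 2))  # 1.0: identical contexts
print(round(cosine(vecs["january"], vecs["bread"]), 2))  # 0.0: no shared contexts
```

On a real corpus these vectors would have tens of thousands of mostly zero dimensions, which is the inefficiency the embedding step is designed to avoid.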

Word embedding models are useful for humanities scholars because of the insights they offer into the ways language is used within a corpus. They are an unusually powerful and nuanced analytical tool because they combine a high degree of user control and complexity with relative computational efficiency. Word embedding models are relatively easy to train and even easier to alter. Because the training process involves randomness (each word starts from a random vector, for instance), each model is slightly different, but by testing different parameters, scholars can refine their models to fit their corpora and research goals. Additionally, word embedding models are able to process and analyze large amounts of textual data in a relatively short time. For example, it typically takes less than 15 minutes to train a model on the Women Writers Online (WWO) corpus of approximately 11 million words, without using particularly specialized or powerful machines.
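Why each trained model differs slightly can be seen in a toy trainer. The sketch below is a heavily simplified skip-gram with negative sampling, one of the training setups Word2Vec supports, written in plain Python for readability rather than speed; it is not the wordVectors implementation. Assumptions: negative words are drawn uniformly from the vocabulary (Word2Vec weights them by frequency), the learning rate stays fixed, and the parameter names `dim`, `window`, `negatives`, `epochs`, `lr`, and `seed` are illustrative stand-ins for the kinds of settings real packages expose.

```python
import math
import random


def sigmoid(x):
    """Logistic function, clamped so math.exp cannot overflow."""
    x = max(-30.0, min(30.0, x))
    return 1.0 / (1.0 + math.exp(-x))


def train_sgns(tokens, dim=10, window=2, negatives=3, epochs=5, lr=0.05, seed=0):
    """Toy skip-gram with negative sampling over a single token sequence.

    Simplifications (assumptions, not Word2Vec's exact behavior): uniform
    negative sampling and a fixed learning rate.
    """
    rng = random.Random(seed)
    vocab = sorted(set(tokens))
    # Every word starts at a small random position in `dim`-dimensional space.
    word_vecs = {w: [rng.uniform(-0.5, 0.5) / dim for _ in range(dim)] for w in vocab}
    # A second set of "context" vectors that the model also learns; they start at zero.
    ctx_vecs = {w: [0.0] * dim for w in vocab}

    for _ in range(epochs):  # each epoch is one full pass ("iteration") over the corpus
        for i, center in enumerate(tokens):
            for j in range(max(0, i - window), min(len(tokens), i + window + 1)):
                if j == i:
                    continue
                # One observed pair (label 1) plus a few random "negative" pairs (label 0).
                samples = [(tokens[j], 1.0)]
                samples += [(rng.choice(vocab), 0.0) for _ in range(negatives)]
                v = word_vecs[center]
                v_update = [0.0] * dim
                for ctx, label in samples:
                    u = ctx_vecs[ctx]
                    # Predicted probability that `ctx` really appears near `center`.
                    p = sigmoid(sum(a * b for a, b in zip(v, u)))
                    g = lr * (label - p)
                    for k in range(dim):
                        v_update[k] += g * u[k]  # uses u before it is updated
                        u[k] += g * v[k]
                # Move the center word toward observed contexts and away from negatives.
                for k in range(dim):
                    v[k] += v_update[k]
    return word_vecs


tokens = "the queen rules the land and the king rules the land".split()
first = train_sgns(tokens, seed=1)
again = train_sgns(tokens, seed=1)
other = train_sgns(tokens, seed=2)
print(first == again)  # True: same seed, same random choices
print(first == other)  # False: different starting vectors and samples
```

Fixing the random seed makes a run repeatable; changing it, or any other parameter, produces a slightly different model, which is why comparing several runs is a useful check.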

Querying trained models

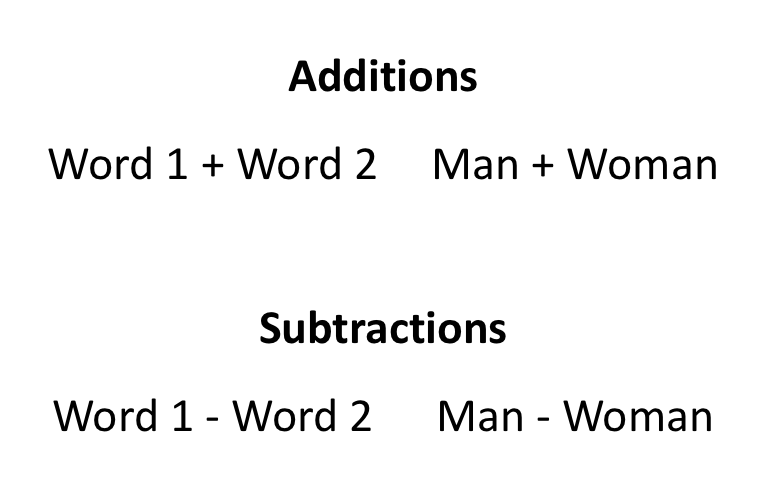

There are many different ways to query a word embedding model; the WWVT interface makes some basic queries available for exploratory investigations, including addition, subtraction, clustering, and analogies. For example, you might use addition (“man + woman”) and subtraction (“man - woman”) to explore the relationship between two terms.

In the first example, the query would return the contexts for the combined vectors of “man” and “woman.” The second would “subtract” the contexts of “woman” from the vector for “man.” Another useful example is an analogy query such as “man - woman + king,” which subtracts the contexts associated with one term from another and then adds the contexts associated with a third term.

The results of this query would include words associated with male rulership: for instance, “prince,” “monarch,” and “emperor.” The “man - woman” portion of the query identifies specifically male-gendered semantic spaces, and the addition of “king” adds the semantic space of rulership. Similarly, if we reversed the first portion (“woman - man”), we would expect to see terms associated with female rulership: “queen,” “princess,” and “empress.” This query works on the premise that there is an analogy between these two pairs of words: man : woman :: king : queen. While these examples are relatively simple, the same format can be used to explore complex concepts, semantic domains, and other patterns of usage across an entire corpus. To explore these and other queries in more depth, see the Women Writers Vector Toolkit interface.
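The arithmetic behind these queries can be sketched as follows: add or subtract word vectors element by element, then rank the vocabulary by cosine similarity to the resulting vector. The three-dimensional vectors below are invented toy values chosen to make the example easy to follow, not output from any trained model, and `nearest_words` is a hypothetical helper rather than the WWVT’s or wordVectors’ code. As a design choice, this sketch leaves out the words used in the query itself, which would otherwise tend to rank near the top.

```python
import math

# Invented toy vectors (not from a trained model). Roughly: dimension 0 ~ male (+)
# vs. female (-), dimension 1 ~ rulership, dimension 2 ~ everything else.
vectors = {
    "man": [1.0, 0.0, 0.2],
    "woman": [-1.0, 0.0, 0.2],
    "king": [1.0, 1.0, 0.1],
    "queen": [-1.0, 1.0, 0.1],
    "prince": [0.9, 0.8, 0.3],
    "princess": [-0.9, 0.8, 0.3],
    "emperor": [1.0, 0.9, 0.0],
    "empress": [-1.0, 0.9, 0.0],
    "bread": [0.0, 0.0, 1.0],
}


def add(a, b):
    return [x + y for x, y in zip(a, b)]


def sub(a, b):
    return [x - y for x, y in zip(a, b)]


def cosine(a, b):
    """Cosine of the angle between two vectors: 1.0 means they point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def nearest_words(vectors, query, exclude=(), n=3):
    """Rank words by cosine similarity to `query`, skipping words in `exclude`."""
    scored = [(w, cosine(v, query)) for w, v in vectors.items() if w not in exclude]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:n]


# "man - woman + king": male-gendered space plus rulership
male_rule = add(sub(vectors["man"], vectors["woman"]), vectors["king"])
# "woman - man + king": the reversed query
female_rule = add(sub(vectors["woman"], vectors["man"]), vectors["king"])

for label, query in [("man - woman + king", male_rule), ("woman - man + king", female_rule)]:
    top = nearest_words(vectors, query, exclude={"man", "woman", "king"}, n=2)
    print(label, [w for w, _ in top])
# man - woman + king ['emperor', 'prince']
# woman - man + king ['queen', 'empress']
```

In a trained model the vectors have hundreds of dimensions with no simple labels, but the query arithmetic and the similarity ranking work the same way.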

For further reading on word embedding models and related topics, see the WWVT annotated source list. In addition, there is a growing collection of posts about word embedding models on the WWP Blog. These include:

- “Should Giants Be Denied Credit? Or, an Examination of Seventeenth-Century Historiographies Using Word Embedding Models” by Sarah Connell

- “Beyond the Box: Archival Descriptions of LGBT Collections” by Juniper Johnson

- “Explaining Words, In Nature and Science: Textual Analysis in Galileo’s Works” by Caterina Agostini

- “A Word Embedding Model of One’s Own: Modern Fiction from Materialism to Spiritualism” by James Clawson

- “A Most Illustrious and Distinctive Career” by Becky Standard